Your Pre-Employment Assessment Is Worse Than a BuzzFeed Quiz. I Have the Research to Prove It.

A client asked me to audit their hiring process. They were struggling to fill a senior role and couldn't figure out why their candidate pipeline kept drying up. Good people would apply, start the process, and then disappear. They wanted to know what was going on.

I found it in about fifteen minutes.

Before any candidate got a phone screen, before a recruiter even opened their resume, the company's applicant tracking system sent an automated email with two links: one to a cognitive assessment and one to a personality inventory. Every applicant, regardless of seniority or experience, had to complete both before a human being at the company would look at them.

The cognitive test was pattern matching, spatial reasoning, vocabulary completion, "which of these shapes does not belong." Math without a calculator. SAT-style word questions. Senior leadership jobs are built on relational judgment, cross-functional communication, and the ability to operate in ambiguity, and the first gate in the process was a ninth-grade standardized test.

(The answer’s at the bottom of this post.)

Then came the personality assessment. "I pay my bills on time." "People talk about me behind my back." "I am lonely." "I get up easily in the morning." "I do things to attract attention." "I have many friends."

We all know the right answer to this one is "Sometimes True and Sometimes False." So what is the point of asking these questions in the first place? I'm paranoid if I pick "Always True" and delusional about how human beings work if I pick "Always False." How does any of this tell you ANYTHING about who I am as a colleague, a leader, or a person who can do this job?

I told them the leak was obvious: their best candidates were closing the tab.

I broke the personality test in ten minutes

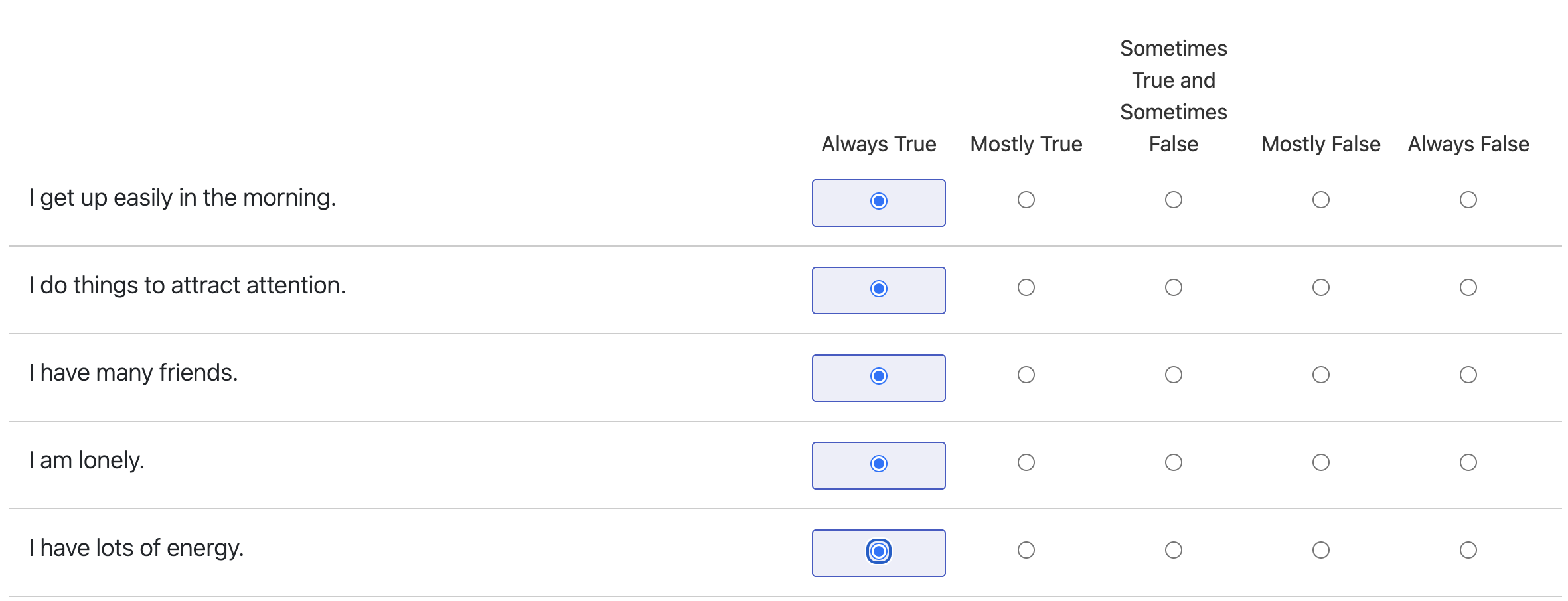

I wanted to show the client what they were actually buying with this assessment, so I ran it myself. Three times. Once answering "Always True" to every question. Once answering "Always False." And once picking the middle option across the board.

I’m a morning person who loves attention and has tons of friends, but I’m lonely. This quiz will DEFINITELY tell you that I’m really good at cross-functional communication and execution. Obviously people with a lot of friends are... uh... good at... um... synergy.

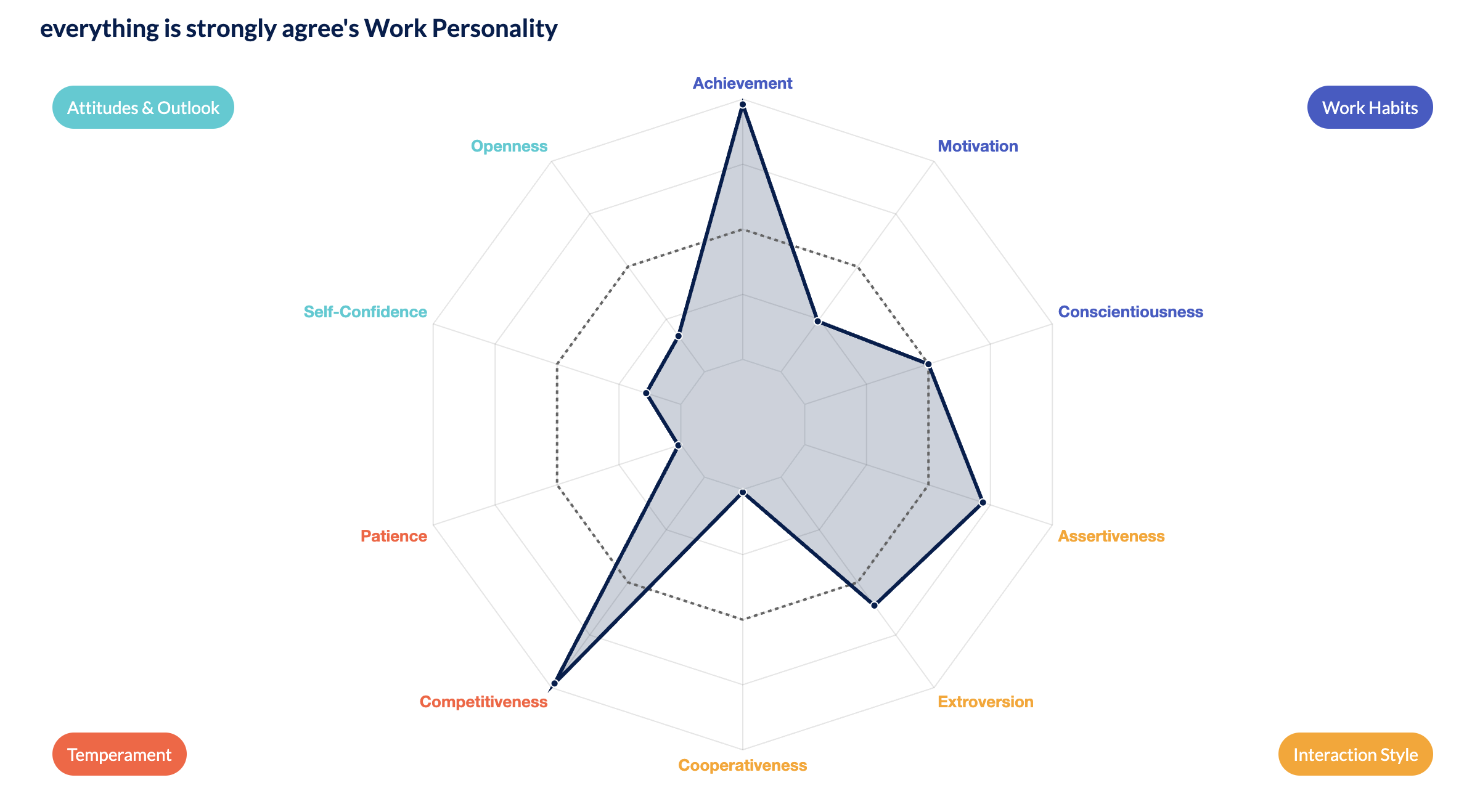

The system generated three distinct "Work Personality" profiles, complete with cool-looking radar charts across ten dimensions: achievement, motivation, conscientiousness, assertiveness, extroversion, cooperativeness, competitiveness, patience, self-confidence, and openness.

"Always True" produced an achievement-obsessed competitive maniac with zero patience and the cooperativeness of a feral cat. "Always False" generated an endlessly patient cooperator with no apparent interest in achieving anything. "Ambivalent About Everything" landed in a lukewarm middle blob that somehow STILL had strong opinions about cooperativeness, despite the fact that I told the test I felt nothing about anything.

You're super competitive... with... no motivation. :(

This is the tool that was deciding whether experienced leaders were worth a phone call, based, somehow, on whether I pay my bills, have friends, and think people talk about me behind my back.

Y I K E S.

RESEARCH!

The best available evidence on what predicts job performance comes from Sackett et al.'s 2022 meta-analysis, published in the Journal of Applied Psychology. This was a landmark study that re-analyzed decades of hiring research with more rigorous statistical methods than previous work, and its findings should make anyone relying on these assessments incredibly uncomfortable.

Structured interviews are the strongest predictor of job performance, with the highest mean operational validity of any selection tool tested. Job knowledge tests came in second. Work sample tests ranked high.

Personality assessments performed poorly. The Big Five dimension with the highest validity coefficient is conscientiousness, and even that clocks in at a correlation of about .20 with job performance. The other four dimensions (extroversion, agreeableness, openness, emotional stability) hover between .07 and .15. Square a correlation and you get the share of variance it explains, so a .20 correlation means conscientiousness explains roughly 4% of the variance in someone's job performance. Four percent. Each of the other four explains between about 0.5% and 2%.
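If you want to check that arithmetic yourself, here is a minimal Python sketch. It uses only the correlations quoted above; the function name and labels are my own, chosen for illustration, and nothing here comes from the Sackett paper beyond the numbers already cited.

```python
def variance_explained(r: float) -> float:
    """Return the share of variance explained by a correlation r, as a percentage."""
    return (r ** 2) * 100


# Correlations as quoted in this post (labels are illustrative).
correlations = {
    "conscientiousness": 0.20,
    "other Big Five dimensions, low end": 0.07,
    "other Big Five dimensions, high end": 0.15,
}

for label, r in correlations.items():
    print(f"{label}: r = {r:.2f}, about {variance_explained(r):.2f}% of variance")
```

Flip it around and the picture is even starker: at a correlation of .20, roughly 96% of the variance in job performance is left unexplained by the trait.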

A BuzzFeed quiz called "The Bagel You Choose Will Tell You What Kind of Company You Should Work For" has about as much predictive validity for job performance as these personality inventories. I'm not being hyperbolic; the math bears that out. At least BuzzFeed doesn't pretend an everything bagel means you're a strategic thinker.

But I am definitely an everything bagel, in case you were curious.

Cognitive ability tests fared better than personality, but far worse than the industry has been claiming for decades. For years, the gold standard citation was Schmidt and Hunter's 1998 meta-analysis, which reported a validity coefficient of .51 for general cognitive ability. That number was cited over 6,500 times and shaped hiring practices across entire industries. Sackett's team found that the .51 figure was inflated by statistical overcorrections and was based exclusively on studies that were already over 50 years old at the time of publication. When they re-ran the numbers, cognitive ability dropped from the top predictor to fifth. A follow-up analysis by Griebie et al. (2022), using 21st-century studies, found an even lower observed validity of .16 (corrected to .23), which would drop cognitive ability tests to twelfth among the predictors Sackett examined.

Twelfth.

The "cognitive test" was the twelfth most useful way to figure out if someone would be good at the job. The most useful way was to sit down and talk to them. My client wasn't doing that until after the twelfth-best filter had already thrown away their strongest applicants.

Why companies keep choosing the wrong tool

I see this pattern constantly in the companies I work with. The assessment tools keep getting adopted because they solve a problem that has nothing to do with hiring quality: they solve for the hiring team's bandwidth.

A structured interview takes preparation. It requires trained interviewers and dedicated time. A personality quiz takes five minutes to set up in an applicant tracking system and runs itself forever. The company never has to think about it again. The candidates absorb all the cost: the time, the emotional labor, the weird indignity of having to pick the most correct answer for "I am lonely" for a job where the core competency is organizational leadership.

This is a systems design problem, and I think about it the same way I think about any broken operational process. When you install a measurement system, the first question you should ask is: does this measure the thing I need to know? And the second question is: what does this system communicate to the people inside it?

These assessments fail both. They measure something, but what they measure is mostly test-taking behavior and social desirability bias.

Social desirability bias is one of the most well-documented problems in self-report personality testing. People in high-stakes situations (like, say, applying for a job) consistently skew their answers toward whatever they think the evaluator wants to hear. Research has repeatedly shown that candidates can and do provide inflated self-assessments on personality measures during selection processes.

Five former editors of Personnel Psychology and the Journal of Applied Psychology, who had collectively reviewed over 7,000 manuscripts, convened a panel at the 2004 SIOP conference specifically to address this problem. Their conclusion was blunt: faking on self-report personality tests cannot be avoided, and the very low validity of these tests for predicting job performance makes the whole exercise questionable. The entire field of industrial-organizational psychology has been arguing about this for over twenty years, and the consensus boils down to: yes, people fake these tests, and no, we haven't figured out how to stop them.

Probably because you can't. But that's another argument for another time.

So what these assessments actually measure has almost no relationship to what the company needs to know. And what they communicate to candidates is: we value data collection over human contact. We'd rather auto-score you than listen to you. Your resume, your references, and the work you've done are worth less to us than whatever cool-looking chart this quiz produces.

The strongest candidates, the ones with options, the ones you actually want to hire, they read that message loud and clear. And they close the tab. Or, like me, they keep submitting different answers solely to see how the radar chart changes.

It was kinda fun.

Candidates: you're better than this. Close the tab.

If a company asks you to complete a personality inventory and a cognitive test before they'll get on the phone with you, that is information. It tells you how they think about people. It tells you that data collection ranks higher than human contact in their priority stack. You are allowed, nay, encouraged to close the tab. You are allowed to decide that a company that won't invest 15 minutes in a conversation before demanding 30+ minutes of unpaid test-taking is telling you something important about what it's like to work there. Trust that information.

Companies: stop weeding out your top candidates with nonsense tests

Structured interviews are the strongest predictor of job performance, according to the best research we have. Work samples and job knowledge tests rank close behind. Case studies and working sessions tell you how someone thinks. Decision walkthroughs, where a candidate walks you through something hard they navigated with all the messy context included, tell you how they operate under pressure. Both of those produce information you can actually use.

All of them require something the quiz-and-radar-chart pipeline does not: treating the candidate like a person whose time and experience are worth engaging with.

The assessments are easy to set up and they produce pretty charts. And I love a pretty chart, but the research says the signal they produce is barely above noise. If your hiring process starts with a personality quiz and a shape-sorting test before a candidate has spoken to a human being, you're not filtering for talent. You're filtering for people who are willing to put up with it.

And that is a very different thing.

Also, the answer was E. A through D have the pattern flipped on the bottom row, E doesn't. Goofy, right?